What Would Montessori Do?

On LLMs, the Zone of Proximal Development, and why help must be given only when necessary

Full disclosure. I think technology in classrooms is the devil. Bring out the salt, the wooden stakes, and the garlic. I have ranted about robots replacing teachers. I will probably rant about it again.

But something happened at a Montessori conference recently and I’m learning to think differently.

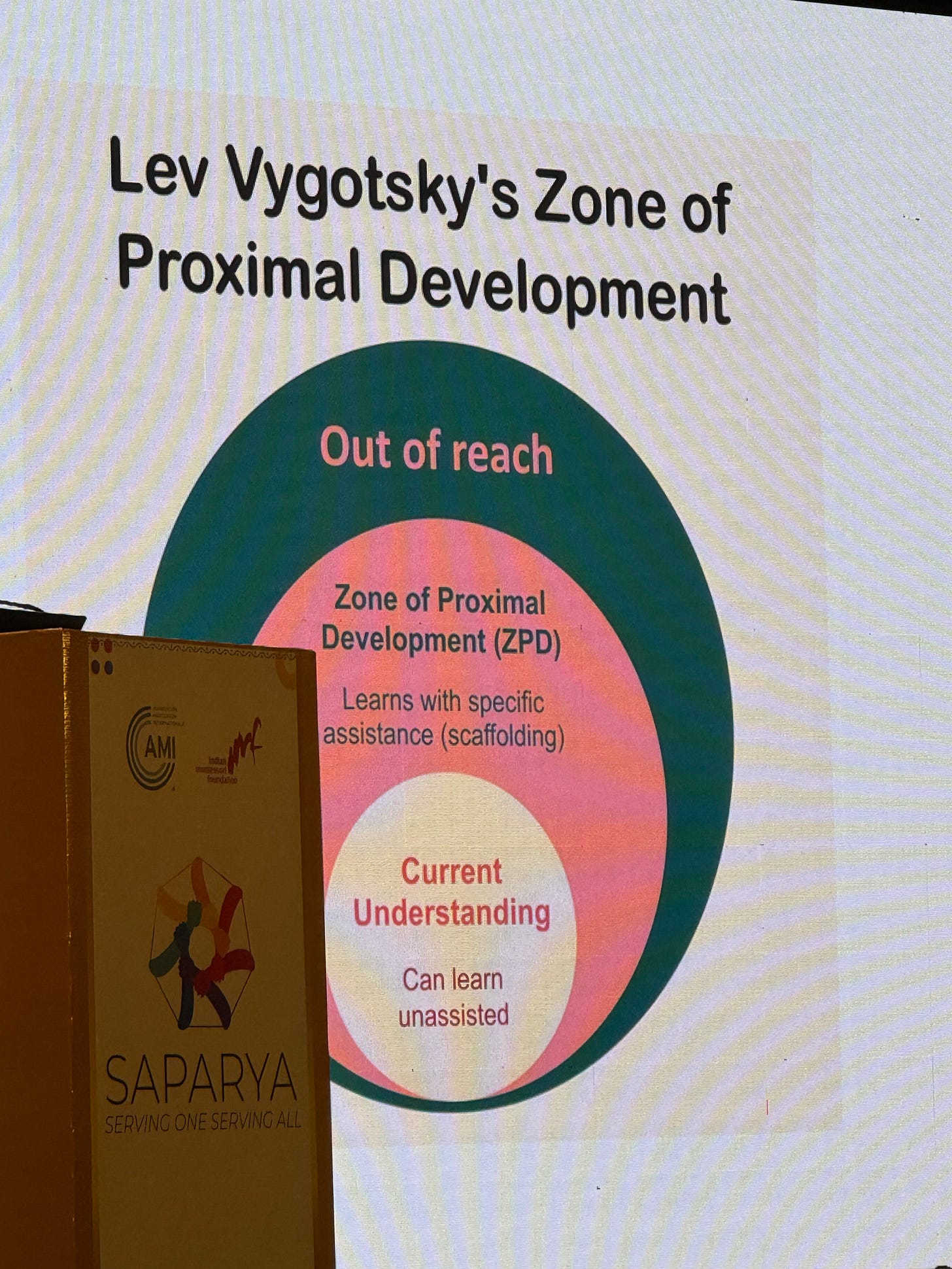

Neelima Mhaskar gave a talk on “Education for Life” and she brought up Vygotsky’s Zone of Proximal Development. If you’re not familiar, picture three concentric zones. The inner zone is where you’re comfortable. You already know the stuff, nothing new is happening. The outer zone is where things are impossibly hard, where you’re drowning and nothing sticks. And then there’s the sweet spot in between, just outside your comfort zone, where real learning actually happens.

Here’s the catch. You can’t make much progress in that zone alone. You need guidance. A nudge. Someone who knows a little more than you, giving you clues and asking questions without handing you the answer. This is what a good teacher does. This is what a Montessori guide is trained to do.

We have decades of data on this. Active learning - actually grappling with problems using your hands and your mind - is what makes knowledge stick in your brain. Not watching someone else do it. Not copying an answer. Doing the work yourself, with just enough support to keep you from falling apart.

The way most kids use LLMs right now is the exact opposite of everything we know about how learning works. A friend told me she watched a child open Perplexity on a school laptop and just type ‘<insert city name> research,’ and I thought I was having a stroke.

Imagine this: A child takes a picture of their homework. Uploads it to ChatGPT. Gets the answer. Moves on. Their brain encodes precisely nothing. If I’m being honest with myself, if I were in school today I would absolutely do this. I would not be able to resist the temptation. And millions of kids can’t either. The current implementation of AI in education is an active learning destruction machine.

But what if it didn’t have to be?

WWMD: What Would Montessori Do

I’ve been turning an idea over in my head. A friend has been using Claudebot as his personal assistant and is building other agents to do other things. What if someone built a version of Claude (or any LLM) that was designed entirely around Montessori principles? Trained on Maria Montessori’s writings. Designed to behave like a prepared guide rather than an answer engine.

Picture this: An 8-year-old takes a picture of her math homework and uploads it. Instead of spitting out the answer, WWMD, the AI agent, does what a guide would do. It looks at where the child is. It asks a question. It gives the smallest possible hint. It waits. It offers help only when necessary and not a moment before.
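For the technically curious, here is a rough sketch in Python of how "the smallest possible hint" could be written down as a rule. Everything in it is my own assumption for illustration: the names `HINT_LADDER`, `GuideState`, and `next_move`, and the threshold of two failed attempts before offering more help, are hypothetical and not taken from any real product. A real guide would read far more than a count of wrong answers.

```python
from dataclasses import dataclass

# Hypothetical ladder of support, ordered from least help to most help.
# Note that "give the answer" is deliberately not on the ladder at all.
HINT_LADDER = ["wait", "ask_question", "small_hint", "worked_step"]


@dataclass
class GuideState:
    level: int = 0            # current position on HINT_LADDER
    failed_attempts: int = 0  # failed tries since the last escalation


def next_move(state: GuideState, attempt_correct: bool,
              attempts_before_escalating: int = 2) -> str:
    """Pick the guide's next move after the child submits an attempt.

    Assumption: help escalates by one rung only after the child has
    struggled at the current rung for a couple of attempts.
    """
    if attempt_correct:
        # The child got there alone: step back to the least help.
        state.level = 0
        state.failed_attempts = 0
        return "acknowledge"

    state.failed_attempts += 1
    can_escalate = state.level < len(HINT_LADDER) - 1
    if state.failed_attempts >= attempts_before_escalating and can_escalate:
        state.level += 1
        state.failed_attempts = 0
    return HINT_LADDER[state.level]


if __name__ == "__main__":
    state = GuideState()
    for _ in range(6):
        print(next_move(state, attempt_correct=False))
```

The design choice that matters is the one thing the sketch cannot do: no path through `next_move` ever returns the answer. The most it can offer is one worked step, and only after the child has had room to struggle first.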

Think Mr. Miyagi. Wax on, wax off. The kid thinks he’s doing pointless busywork, but the lesson is hiding inside the struggle. The best guides work the same way. They don’t explain the destination. They design the path so you can’t help but arrive there yourself.

The computer becomes the prepared environment. Everything the child needs is available, but nothing is simply handed over.

For kids who already have access to good schools, this is a nice supplement. But think about the children who don’t. Kids who can’t attend school for health reasons, or kids in places where quality education simply isn’t available. For them, an LLM trained to guide rather than answer could be something close to a lifeline.

This thought process started after my friend Shibani (of Innahouse fame) and I had lunch and she told me about her work with children undergoing cancer treatment and how it affects them psychologically. It got me thinking about their education.

The Cruelty of Not Helping

I need to tell you something about what it feels like to be a Montessori adult. We are trained to offer the least amount of help possible, and only when necessary. In practice, this feels cruel. You watch a child struggle and every instinct in your body screams to jump in and fix it for them. And then when you do step in, that feels cruel too, because you might have just stolen the exact moment where they would have figured it out on their own.

I’m sure many practitioners have found that happy middle ground. I’ve got a few more years before I get there.

But this tension, this constant judgment call of when to step in and when to hold back, is the entire art of teaching. It requires reading the child. Knowing their frustration threshold. Seeing the light in their eyes or the defeat in their shoulders.

A machine will never know the soul of a child. It can’t observe the way a guide sitting three feet away can. It can’t read body language or sense when someone is about to give up versus about to have a breakthrough. It can’t love them.

But it can be programmed to never just hand over the answer. It can ask before telling. It can scaffold. It can meet a child in their zone and nudge them forward. And for the millions of children who currently have no guide at all, that is not nothing.

I care deeply about humans not losing the ability to think well and critically about the world around them. I also think we shouldn’t be afraid of technology.

These two things don’t have to be in conflict.

The question isn’t whether AI belongs in education. It’s already there, and kids are using it to skip the hard part. The real question is whether anyone will bother building AI that does what the best teachers do.

Help only when necessary. And not a moment before.

There's a book I read, "The Flickering Mind." It's a little outdated now, but it gets at why technology doesn't help students. It's worth a thumb-through.

Also, more than anything else, Maria Montessori would OBSERVE what worked and then incorporate that into her work. Montessori education has always been one of empiricism.

We've actually built exactly this at PEP. Happy to chat.